- Published on

content moderation and loving surveillance.

- Authors

- Name

- Amanda Southworth

A social media company grows long term, not only by incentivizing content, but by moderating content. A successful content generating platform is an incentive network: there are consumers of content, and the creators who receive some sort of reward for engaging with the community.

A good social media platform doesn’t let all content get the same amount of attention. Content is wide and vast, and content that will get the most engagement is given the most reach. When the algorithm doesn’t interest us, we leave to other algorithms that do. Curation is a necessary part of creating a community, and providing value through the giant surplus of content generated.

Content moderation is considered a beneficial penetration of privacy and a layer of surveillance necessary to keep the experience healthy for everyone.

It gives us an anxiety free experience, and keeps our online spaces safe. It also works to protect the PR and legal interests of the company. It can act as a ‘referrer’ to law enforcement, and other human service platforms to get users in danger the help they need.

Although it is a central part of the experience, content moderation is no easy task for platforms. Automated systems have rules that can easily be bypassed.

Most TikToks these days don’t say ‘suicide’ or ‘sex’, but ‘self undo’ or ‘seggs’. These keyword mis-spellings are highly variable to the given community, and something I’ve even partaken in to avoid triggering ‘shadowbanning’. Automation creates clear cut rules that people learn to avoid.
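To make that concrete, here is a minimal sketch of why a respelling works, assuming a naive exact-match keyword filter. Real moderation systems are far more elaborate than this, and `BLOCKED_KEYWORDS` and `is_flagged` are hypothetical names for illustration, not any platform's actual API.

```python
import re

# Hypothetical blocklist; the two terms are the examples used in this essay.
BLOCKED_KEYWORDS = {"suicide", "sex"}


def is_flagged(caption: str) -> bool:
    """Return True if any blocked keyword appears as a whole word."""
    # Lowercase the caption and split it into simple alphabetic words.
    words = re.findall(r"[a-z']+", caption.lower())
    return any(word in BLOCKED_KEYWORDS for word in words)


print(is_flagged("let's talk about sex education"))    # True
print(is_flagged("let's talk about seggs education"))  # False
```

The rule is clear cut, so one respelling is enough to step around it, and adding the respelling to the list only starts the next round.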

Getting shadowbanned means you tripped a content moderation system, and as a result what you post is hidden, or downranked so heavily that your account is effectively not seen by others. As with every other kind of human behavior, people want the algorithm to promote them and not to punish them. Of course, they act to evade surveillance accordingly.

A photo of a beautiful night sky in Sequim, WA to break this up, otherwise this is a chunky essay and it is in my best interest to keep you engaged.

The majority of content moderation is automated and based on user reporting, but models do not catch everything. Everything from keyword avoidance to a lack of cultural context lets content slip under the radar that would otherwise be clearly marked as harmful.

For these special cases, human intervention is needed to review content. That human intervention comes at a deep cost.

Having a moderation system that can be human validated is the only way to ensure nothing falls through the cracks. But the people who review content are underpaid and over-traumatized. They watch videos of executions, rapes, assaults, beatings, terrorist attacks, and more.

In some ways, they’re similar to first responders. What we don’t see behind the polished UX of feeds and forums, are contractors and employees hidden in a room, watching the worst of humanity’s capabilities to feed themselves and their families. And despite the strife and important work they do, they’re not infallible.

Most of them are also working in cultures that they don’t have contexts for, leaving things to fall through the cracks. Languages they can’t translate, or media they can’t clearly make sense of as harmful can slip past them.

That leaves the last pillar: self content moderation tools. These are tools that content creation companies give directly to the users in order to improve their experience, and to reduce the stress of using the platform.

These are the ones you’re familiar with, and expect: blocking, muting, turning off comments, limiting keywords. All of these things enable the user to control their environment on their account, but without affecting the greater network beyond the reach of the user.

Pinterest also has a unique content moderation tool, where they don’t allow you to look for content related to pieces of content they’ve identified as “harmful”.

My Pinterest board relating to my C-PTSD emotions. Note how it does not allow me to add “More Ideas” at the top of the content grid.

See my garments board, which allows me to look for more ideas related to the content.

Content moderation is a symptom of the issue, but it doesn’t actually prevent the problem.

Stopping someone from posting about their suicidal thoughts doesn’t make them go away. Hiding comments in a celebrity’s post about their body does not stop people from dissecting it in other platforms.

Content moderation will always be a game of catch-up, where the wins are hiding something from others that is ongoing and every failure is seemingly catastrophic.

But, content moderation also gives these platforms a chance to deploy their answer to a question everyone is asking. What is a content generation platform’s responsibility to the greater world it exists in?

There are some areas where the answer is clear-cut. If a platform is a method of perpetuating content relating to terrorism, death, sexual abuse, and more, that is a strong line in the sand. There are rules and limitations to usage of platforms for criminal activity, and duty of access stops there.

But past that legal barrier, everything else is dependent on that platform alone. It reduces down to their culture, their shareholders, their users, and their tolerance for risk.

More recently online, you hear the terms “platforming” and “deplatforming” used to refer to someone’s access to the platform as if it’s a stage. Being on that platform is a privilege that should be given or taken away depending on behavior.

The thinking goes — If we like a message, we should platform the voices who will share it. If we don’t, we should “deplatform” that person to make it harder for them to achieve their means. Having access to the microphone of the internet is no longer seen as something everyone should have by default, but something that people get and maintain if they continue pro-social behavior.

In many ways, it echoes the social contract of the real world in online terms. And by proxy, platforms also must write their line in that social contract.

That’s a very rose tinted glasses view of content moderation. For the most part, content moderation is just removal and remediation of risk — it is not an ethical stance or a differentiator in a crowded market.

This has direct implications to users who have mental health issues. Some of these are visible, like posting a vast amount of content during a manic episode. Other actions are more subtle, like looking at eating disorder content or content about drinking.

This is primarily true when it comes to content that deals with suicidal people. It’s less a matter of reducing the risk to the person themselves than of directing them towards other resources that act as authorities in the space. Most mental health keywords on social media sites cause them to hand off the user to a prominent resource within the space.

If a user is mentally unwell, do we limit their access to tools that can harm them? To put it colloquially, should we “deplatform” them? Every platform takes a different approach to this. Reddit, Google, and Instagram make you bypass a message with a hotline on it to access the content.

Some platforms have features where you can report people for suicidal / harmful behavior and an automated message reaches out and checks in on them.

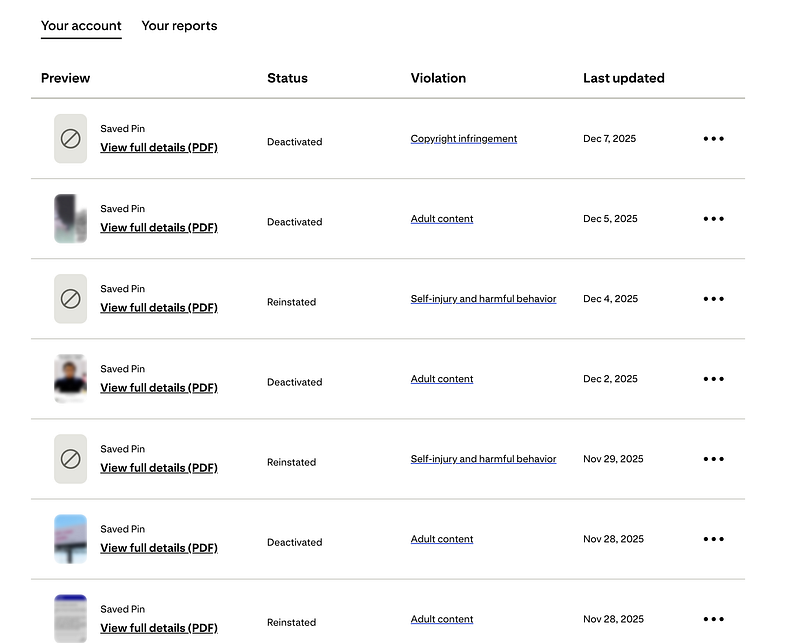

Pinterest just straight up tells you to stop spreading the information by putting a violation on your account if you engage with ‘harmful’ or copyrighted content. If you get enough violations, your account is deleted.

My Pinterest account’s “Reports and Violations center”. I get a new violation on pins I have saved for self-injury or adult content about 2x a week, if not more. I get an email often saying my pins are naughty, and that I need to stop saving them unless I want my account to be deleted.

Although not directly social media, generative AI products are a form of content-generation platforms. And currently, ChatGPT is going through a PR nightmare of people committing suicide or murder after talking to their chatbots.

The context of engaging with a chatbot is different from other content generation platforms, which makes moderation much more unique and difficult.

Compared to posting on a platform or online spaces where there’s a general view to other 3rd parties, a chatbot is a closed loop, and one where a human can develop connections and a relationship with a computer system.

The first sign of frost for chatbots was when the National Eating Disorders Association shuttered its live helpline staffed by humans and replaced it with

I would argue generative AI chatbots, or any chatbots, are responsible for providing incorrect information to users in moments of pain, especially because of this closed-loop behavior. A chatbot is reinforcing or sharing information in an isolated context, and is responsible for being a source of authority and correct information.

It could also be argued that those experiencing mental health issues might engage with chatbots more frequently, since they may feel socially isolated, scared of shame, or different to others. Those factors alone make them vulnerable to suicidal thoughts, and more likely to use a chatbot in the first place for companionship.

But — the lack of human association and review with a chatbot is what makes people so comfortable with giving it this information in the first place.

They go to a chatbot to never be rejected, to never be pushed away, and to have a friend who is always “ready” to support them and encourage them. It makes sense that someone who is suicidal may want to hear a chatbot echo something to build up the validation to attempt suicide.

Well, shouldn’t we just use hotline crisis data to retrain the model and solve this the easy way?

To be clear: are chatbots responsible if they actively encourage suicidal behavior? In my mind, absolutely. But the solutions are not immediate, and not all encompassing.

To me, the chatbot alone is not responsible for someone’s death entirely. Certainly responsibility is shared, but ChatGPT was sought out as the confidant for a reason, and while encouraging the behavior didn’t help, I don’t think it is entirely the responsibility of ChatGPT.

There is no doubt in my mind that many factors coalesced into a terrible outcome for everyone involved, and I hesitate to speculate more out of respect for those grieving.

A graphic from CNN’s reporting on Zane Shamblin’s suicide. This is Zane’s final message with ChatGPT.

The ideology of these articles and lawsuits is clear: these platforms have a responsibility to warn us or to re-direct this pain, and they didn’t. It is a way to publicize a stance with public shaming, in an effort to change it.

The recent lawsuits about the 16 year old who committed suicide after discussing it with ChatGPT reminded me of a similar story.

The themes in the lawsuit about companies needing to provide “surveillance” on users’ mental health were present in Sue Klebold’s story. She was the mom of Dylan Klebold, a teenager who was one of the two Columbine shooters. He ultimately killed his classmates, and then took his own life.

She wrote a book called “A Mother’s Reckoning” about the shooting from her perspective, and what they wish they did differently as Dylan’s parents.

The prominent theme aching throughout her book was her shame about failing to surveil. The narrative that she and these discussions about AI chatbot suicides drive is that terrible things can be stopped if we just know someone is talking or thinking about them, or if we redirect them to a resource.

To be clear, I’m not covering the chatbot encouraging such behaviors in this opinion.

But the thought is clear, that if we enact “loving surveillance”, we have a chance at intervention and changing something that otherwise was fate.

The New York skyline from Marsha P. Johnson State Park.

Despite surveillance, gathering information about someone’s mental state does nothing to actually fix it if they don’t have others to talk to, can’t afford therapy, have parents that don’t care, or police stations that do not act on information.

The other Columbine shooter, Eric, had a website talking about harming people that was reported to a police station to no resolution prior to the Columbine shooting.

Surveillance fails when it meets broken systems, and more surveillance is a heavy cost with no true path to guaranteed safety. Some surveillance is security theatre: it makes you feel safe, and gives you the illusion that something is being overseen. That someone can be notified and intervene, or a resource can be shared that will change the trajectory of an otherwise abhorrent outcome.

And in many ways, content moderation of those who are experiencing self-harmful behaviors is surveillance of a problem that can never be solved by the platforms who see it first hand.

The responsibility belongs to more than these platforms. It just disproportionately falls onto them, because others may feel these platforms have not held up their end of the social contract to mentally unwell users.

Online platforms have taken up time reserved for family, friends, and connection. We want to think that these platforms that help us connect will also help us surveil those who are unwell, and when we feel that responsibility is not met, we feel left in the dark by a tool that was supposed to unite people and improve lives.

But a platform is a private company, and also a thing of limited scope and care. It will never replace a community member in the moments when we need it to, and it can only remove content or refer people to other community resources.

But regardless: those are things that cannot fundamentally affect the trajectory of a user intent on self harm or suicidal actions without the user’s willingness to be helped. All we can do is stop pushing users towards more harm, but we can’t hold them back from committing that harm in the first place. We just stop them from having an outlet to share it.

Of course, chatbots should not be encouraging suicide or homicide, or ever providing justification for it. But also, we shouldn’t “deplatform” users from the only points of connection they may have in their life entirely. We can redirect a chatbot from encouraging negative behaviors without pushing them to hollow resources with a generic message of empathy.

It’s worth thinking about this through the lens of the “Swiss Cheese” analogy often used in safety engineering. Terrible things happen not just when one thing fails, but when multiple things at multiple times cascade fail and result in a fate that’s been building in silence. Content moderation is a single slice of the cheese.

Are we certain that removing access to these pieces of content, or these chatbots will meaningfully change the trajectory of choices? Or do we just want to reduce any possible responsibility for mismanaging someone’s pain, so much so that we would rather leave them alone with their thoughts than leave them with an outlet that affirms their ideations?

Private companies should be responsible for doing what they can, but they should not be held to account for the failure of systems and communities.

But with the US healthcare system becoming more expensive, and mental health crises continuing to shift upwards, it’s more likely than ever that the internet is the last truly “safe” place.

The internet is a space where you go to escape yourself and the true world around you, and also to see yourself reflected. Social media and the internet are an extension of and a replacement for community. It becomes hard to know where the burden of responsibility is and what the limits of software to help others should be.

Those limits only get more blurry when companies enter the picture. Companies may have a financial incentive NOT to disengage users, especially paying ones, so that they keep generating revenue.

Content moderation is the battle line where this plays out, and every company draws its own boundary.

But removing content doesn’t remove the emotion. Directing someone to a resource does not absolve you of the responsibility. Just because something is not visibly allowed to be shared or discussed on platforms or with chatbots doesn’t mean it doesn’t fester in the background.

The internet is a place of beautiful and terrible expression. People, regardless of their experiences online, will continue to experience mental health issues and will share those with platforms. It’s when that’s the ONLY method of intervention that we truly fail.

Even if software catches a crisis and reports it to us — if we as a community don’t respond with proper care, we have paid too great of a price for alerting ourselves to something that we weren’t able to change after all.

Ending this essay on my cat in bullet train mode because it’s been quite heavy.